How growth mindset shrank

Not long ago, growth mindset was described as life-changing. Now it's just a minor factor that might (or might not) help your kids' education. And that's okay.

People can change. That’s the basic idea of “growth mindset”, an idea which is now utterly unavoidable in any educational context: schools train teachers in how to encourage the idea in kids; kids do workshops in how to improve their growth mindset; charities hand out big grants for more research on it; endless books are written about how to harness it in your everyday life.

You can see why the idea—which originates with Stanford University psychologist Carol Dweck—is so popular. It’s an uplifting message: if you aren’t doing well at some task, most obviously at school, then you can work hard and change your brain to do much better at it. The opposite of a growth mindset is a fixed mindset, where you believe that people are stuck with a particular intelligence level and skill level and can’t ever improve themselves. It’s debatable whether anyone really holds this extreme view, but proponents of mindset argue that people vary in the extent to which they believe in growth.

Perhaps you know Dweck’s bestselling 2006 book Mindset. She claimed that if you read it…

[y]ou'll suddenly understand the greats—in the sciences and arts, in sports, and in business—and the would-have-beens. You'll understand your mate, your boss, your friends, your kids. You'll see how to unleash your potential - and your children's.

Or perhaps you saw Dweck’s 2014 TED Talk (5 million views on YouTube; another 14 million on the TED website). It’s all about the power of “yet” - encouraging people to believe that they can, in future, reach their goals, even if they can’t yet. You can’t answer that question on the maths test… yet. You can’t play that song on guitar… yet.

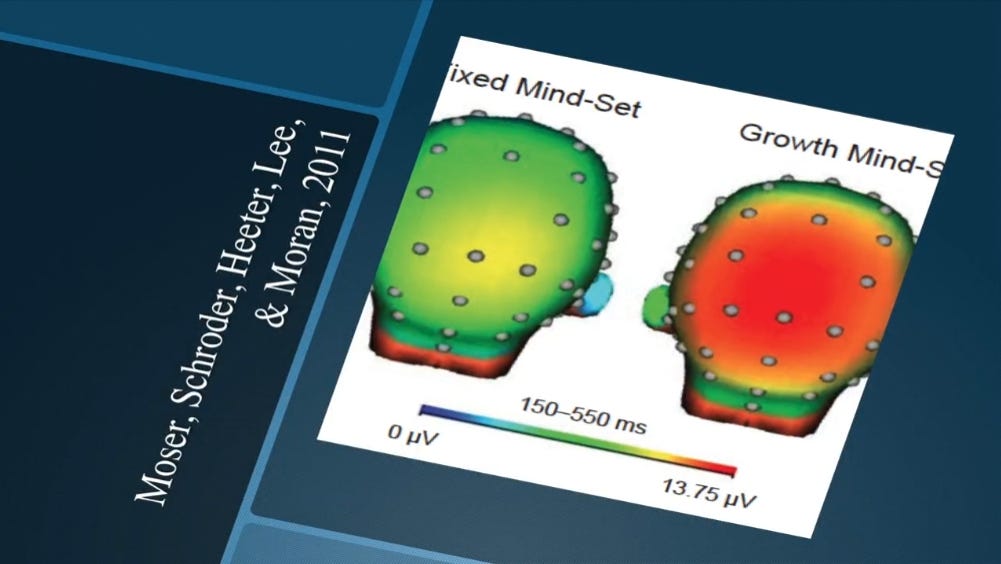

As Dweck explains in the talk, the idea of “yet” can have big effects on your brain. Dweck includes a slide (below) of EEG measurements of brain activity after someone has made a mistake in a laboratory task. Here’s how she describes it:

On the left, you see the fixed-mindset students. There's hardly any activity. They run from the error. They don't engage with it. But on the right, you have the students with the growth mindset, the idea that abilities can be developed. They engage deeply. Their brain is on fire with “yet”. They engage deeply. They process the error. They learn from it and they correct it.

One brain is green, and the other is red. What more could you possibly need to know? Well... I looked up the original paper: it had a sample size of 25 people, and the errors were on a task where they had to identify the central letter in rows like this: MMNMM, MMMMM. Apparently the EEG showed that a certain type of brainwave, one that’s been associated with paying attention to errors, was larger in people who scored higher on a growth mindset questionnaire.

The thing Dweck said about “they learn from it and they correct it” is, I think, her somewhat glossed description of what’s actually in the paper, which is that the students tended to get more correct answers after an error than after a correct answer, particularly so if they scored higher on the growth mindset questions, p = .03. I guess you’ve got to dumb it down a little in a TED talk, but you’ve got to wonder if the very meagre amount of data here really justifies Dweck’s sweeping statements.

More generally, you’ve got to wonder if Dweck considered whether the results of this very small, to my knowledge unreplicated, study were really ripe for publicising to literally millions of people - or whether they were chosen purely because of that powerful illustration of the green “hardly any” brain and the red “on fire” one.

But never mind that. In the peroration of her speech, Dweck says the following:

Let's not waste any more lives, because once we know that abilities are capable of such growth, it becomes a basic human right for children, all children, to live in places that create that growth, to live in places filled with "yet" [my italics].

There aren’t many psychological concepts that constitute “a basic human right”, where if we didn’t focus on them we’d be “wasting lives”. This stuff is a big deal!

No wonder that, in a 2015 article recommending policies to promote growth mindset, Dweck and her colleagues lamented that growth mindsets were “not yet[!] a national education priority”, and proposed that the US should:

[e]stablish academic mindsets as one of the major issues in education identified by the Department of Education.

Growth mindset research has gone even further than national education priorities and basic human rights. In what must count among the most remarkable overreaches of psychology research, Dweck and her co-authors claimed that growth mindset could help solve the Israel-Palestine Conflict. This is real. It’s worth taking a little detour to describe it, just to show how far beyond the school classroom—and beyond ridiculous—this idea has gotten.

Growth mindsets for peace

The first study on this question was published in 2011 in no less august an organ than the journal Science, and was titled:

Promoting the Middle East Peace Process by Changing Beliefs About Group Malleability [my astonished italics]

It had four sub-studies. In the first one they showed that, among Jewish Israelis, the belief that “groups can change” was correlated (r = .3) with being more positively-disposed to Palestinians. Then they did three intervention sub-studies which tried to instil that “group malleability” belief by having people read a passage about how science shows that people can change.

In the first of these intervention studies they found that, of the total n = 76 Jewish Israelis, those who got the “malleable” intervention (they don’t say how many) were more likely to have a positive attitude to Palestinians than those who were told that groups had a fixed nature, p = 0.02. In the second, they found the same thing for n = 59 Palestinian-Israelis, p = 0.03. And in the third, they found the same thing for n = 51 Palestinians living in Palestine, p = 0.03.

When you see the very borderline results of these interventions, which are consistent with either p-hacking or just very weak evidence (and this was 2011, so there’s no pre-registered analysis plan we can check), you do wonder about the title I quoted above, and also about why Dweck felt she should promote the study in so many places, including in a Scientific American piece about “The Remarkable Reach of Growth Mindsets”, and in an American Psychologist piece where she did at least admit that growth mindset might not be the only solution to the Israel-Palestine conflict:

…conflict in the Middle East will be around for a long time to come, [but] it is important to know that the negative attitudes are not frozen.

Dweck and her colleagues did a follow-up study more recently, where they recruited 74 Jewish-Israeli and 67 Palestinian-Israeli school students aged around fourteen, and had half of each group do another growth-mindset intervention, which included the following:

…participants learned about changes that occurred in groups throughout history and about leaders like Gerry Adams and Martin Luther King Jr. who believed in groups’ ability to change, identified these changes, and were able to amplify these changes.

Hold on a second - Gerry Adams!? The man who is very credibly accused of orchestrating a terror bombing campaign that killed hundreds of innocent civilians, and personally ordering specific murders?! It is, to say the least, surprising to mention him in the same breath as Martin Luther King Jr. - but I suppose it’s true that Adams is an example of “change”, in that he changed from being an active terrorist to a retired one.

Anyway, they compared the children who’d had the mindset intervention with those who’d had a control intervention about coping strategies. They were given two collaborative tasks to do, in groups of mixed Jewish-Israeli and Palestinian-Israeli kids. The first task was a game where they had to step into a circle to touch certain numbers on the floor, but weren’t allowed to talk - it was supposed to test their nonverbal communication. The second was to see how tall the group could build a tower out of pieces of spaghetti, tape, and other objects.

The results? Here’s a sentence I could never imagine typing before I read this paper: learning about Gerry Adams (among other things) led to the Israeli and Palestinian children building a 59% higher spaghetti tower, p = .01. They were also, to quote the authors, “somewhat” quicker on the circle task, but the test had p = .06, so if you’re playing the statistical-significance game you can’t have this one.

Despite what I’d hope is the patent, obvious absurdity of this research (if it hasn’t sunk in yet, just re-read the italicised sentence above and consider how close this highly artificial setting is to the reality of a decades-long and deadly religious, ethnic, and national dispute), it does still get the odd reference from the mindset proponents. For instance, in a 2019 review article, Dweck wrote that:

Although this is just a beginning, these initial findings are promising and may provide insights for future conflict resolution efforts.

Good luck with that.

Honey, I shrunk the effect size

That 2019 review article is a collaboration between Carol Dweck and one of her colleagues, David Yeager, who’s a professor at UT Austin. It’s an unusual article - a summary of research from Dweck’s perspective as the originator of the field, and then from Yeager’s perspective as someone who came in more recently and started doing bigger, better studies.

Yeager, for instance, is the lead author of an impressive 2019 Nature paper (on which Dweck is a co-author) that tested the effects of an online growth mindset intervention on over 6,300 students aged fourteen to fifteen, at 65 different schools. They found that, on average, the students’ test scores were improved by .11 standard deviations by the hour-long intervention—which promoted the idea that people can change their ability levels with effort, and which thankfully didn’t include anything about the Irish Republican Army—with bigger effects in schools that had more supportive norms for learning.

The Nature paper contains a section that argues that, in the context of educational intervention research, effects that would ordinarily be considered quite small are actually large. This is also a point made in the collaborative review paper, where Dweck and Yeager argue that since mindset research has moved out of the lab and into the real world, we should expect smaller effect sizes.

I have mixed feelings about this. On the one hand, I agree that effects of smaller size are to be expected in the real world; I agree that large effects, particularly for psychology-based interventions, but for tons of other things too, generally don’t exist in educational research. There are lots of small-effect variables that add up to affect school performance—a little bit of anxiety here, a little bit of motivation there, a little bit of teacher influence, a little bit of socioeconomic background—and it’s important to work them out.

But on the other hand, they are small effects: in an absolute sense, the growth mindset intervention simply isn’t producing massive or life-changing jumps in school achievement. Saying that we should just call small effects “large effects” is not only self-serving on the part of the growth mindset proponents, but it stops us from internalising the message that small effects are fine, and are to be expected in certain areas of research.

Incidentally, the conclusion of a 2018 meta-analysis of all the available growth mindset research at the time was also that the effects were real, but generally quite small. Looking at 273 studies, growth mindset correlated with school performance, but not a huge amount (correlation of r = .10); and across 43 growth mindset intervention studies, the interventions improved school performance, but not a huge amount (effect size of .08 standard deviations).
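To get a feel for just how small these numbers are, here is a rough sketch in Python that translates them into more intuitive terms. It assumes the effect sizes can be treated as standardized mean differences (Cohen's d) on normally distributed scores; under that assumption, d tells you at which percentile of the comparison group the average student who got the intervention would sit (Cohen's U3), and a correlation r accounts for r² of the variance in school performance. The function names are made up for illustration.

```python
from statistics import NormalDist


def variance_explained(r: float) -> float:
    """Proportion of variance in one variable accounted for by a correlation r."""
    return r ** 2


def percentile_of_average_treated(d: float) -> float:
    """Percentile of the untreated distribution where the average treated
    student lands, assuming normal scores shifted by d standard deviations
    (Cohen's U3)."""
    return 100 * NormalDist().cdf(d)


if __name__ == "__main__":
    # Correlation between growth mindset and school performance (2018 meta-analysis)
    print(f"r = .10 explains {variance_explained(0.10):.1%} of the variance")
    # Intervention effects: 2018 meta-analysis and the 2019 Nature study
    for label, d in [("meta-analysis", 0.08), ("Nature study", 0.11)]:
        pct = percentile_of_average_treated(d)
        print(f"{label}: d = {d:.2f} puts the average student at percentile {pct:.1f}")
```

Under those assumptions, a correlation of .10 accounts for about 1% of the variance in school performance, and effects of .08 and .11 standard deviations move the average student from the 50th percentile to roughly the 53rd or 54th.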

Since then, as far as I’m aware, the two most important studies are Yeager’s online-intervention study in Nature, and another 2019 study run by the UK’s Education Endowment Foundation, which tested a teacher-led growth mindset intervention given to more than 4,000 ten- to eleven-year-olds in England. In this one—also a high-quality piece of research—the effect size of the intervention on school grades in maths, reading, and writing was… absolutely zero. It had no effect at all.

So, do mindset interventions only work in the US? Only online? Only in older adolescents rather than younger kids? We don’t know yet. We should be open-minded, but in all the wrangling over the what and where of the effect, one thing is for sure: growth mindset doesn’t have the enormous effect that it sounds like it has in Dweck’s TED Talk.

In all this more recent discussion of better-quality studies, some of which she has herself co-authored, Dweck’s earlier statements are the enormous elephant in the room. Remember the “unleashing your potential”? Remember “basic human right”? Remember “national education priority”? To the millions who watched her lecture and read her writings, these aren’t descriptions of small effect sizes. They’re not even descriptions of small effect sizes that, in context, are actually quite substantial. They’re descriptions of something absolutely crucial, even life-changing. Combine that with the idea that this is an effect so powerful that it can even “Promote the Middle East Peace Process”, and it’s little wonder growth mindset caught on in such a big way in schools.

Look, I’m not saying there should be a big grovelling apology for the massively overhyped claims in the past. I’m happy to see that growth mindset has been cut down to size and is being talked about and debated more realistically, even by those who are strongly predisposed to discuss it positively. But it should be on the record that—if it turns out that growth mindset works, for some people, somewhere—this is a story of a small baby rescued from a heck of a lot of bathwater.

Indeed, growth mindset could be a classic case of…

The Decline Effect

Before the Replication Crisis, there was the Decline Effect. This was the topic of what turns out to be an incredibly prescient New Yorker article from 2010, by the science writer Jonah Lehrer. Yes, Lehrer is the guy who made up quotes from Bob Dylan, plagiarised himself, and made various scientific errors in his writing, and now very much counts as “disgraced”. But regardless, it’s quite uncanny how much that article, with its subtitle “Is there something wrong with the scientific method?”, looked like the replication-crisis pieces which began to pop up everywhere around two years later.

The decline effect is the idea that scientific findings get smaller over time. That is, initial findings tend to report effect sizes that then crumble away—sometimes to nothing—with subsequent research. You can see how this presages the replication crisis, which is all about later scientists being unable to find the same, or the same-sized, findings as earlier ones.

The idea doesn’t originate with Lehrer, though. As with so many great things, it comes from parapsychology, the study of extrasensory perception and other psychic powers. It was first noticed within individual experiments: people who appear at first to be showing psychic powers (on tasks like guessing the cards Bill Murray has at the start of Ghostbusters) often trail off. But it was also noticed across attempted replications of experiments. Some parapsychologists have suggested that the reason for replication struggles might be that the participants, the experimenters, and the reviewers—and even the people who later read the paper!—might have mysterious time-travelling psychic-scepticism powers that can nullify the effects. Needless to say, this has been a bit tricky to prove.

In the New Yorker piece, Lehrer gives reasons for the decline effect that are somewhat more realistic, and that are also very familiar to us in 2022:

Publication bias: positive results and big effects are more likely to be published, which makes the initial studies appear to have much bigger effects than is the reality;

p-hacking (though back then it didn’t have that specific name): scientists “lean” on the results to make their initial studies look like they have bigger effects, and future studies can’t find the same thing;

Randomness: maybe the initial discoveries were just flukes, or fluke-ishly large effects which later turn out to be smaller (this only really works when combined with publication bias, because you’d also expect lots of fluke small effects that later turn out to be larger).
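The first and third explanations work together, and a toy simulation makes the mechanism concrete. This sketch assumes a small true effect (0.10 standard deviations); many small early studies with 25 people per group, of which only the positive, "statistically significant" ones get published; and a handful of large later studies with 2,000 per group that get reported whatever they find. All of these numbers, and the function names, are hypothetical choices for illustration; none come from the studies discussed above.

```python
import random
import statistics

# Hypothetical parameters, chosen only for illustration
TRUE_EFFECT = 0.10  # true effect of the "intervention", in standard deviations
SMALL_N = 25        # people per group in each early study
LARGE_N = 2000      # people per group in each later replication
Z_CRITICAL = 1.96   # two-sided p < .05, positive direction only


def run_study(n: int, effect: float, rng: random.Random) -> tuple[float, float]:
    """Simulate one two-group study and return (estimated effect, z statistic)."""
    treated = [rng.gauss(effect, 1.0) for _ in range(n)]
    control = [rng.gauss(0.0, 1.0) for _ in range(n)]
    diff = statistics.fmean(treated) - statistics.fmean(control)
    se = (2.0 / n) ** 0.5  # standard error of the difference, unit variance known
    return diff, diff / se


def published_estimates(n_studies: int, n: int, effect: float,
                        rng: random.Random) -> list[float]:
    """Run many studies but keep only positive, 'significant' estimates."""
    kept = []
    for _ in range(n_studies):
        diff, z = run_study(n, effect, rng)
        if z > Z_CRITICAL:
            kept.append(diff)
    return kept


if __name__ == "__main__":
    rng = random.Random(2010)
    early = published_estimates(500, SMALL_N, TRUE_EFFECT, rng)
    later = [run_study(LARGE_N, TRUE_EFFECT, rng)[0] for _ in range(20)]
    print(f"true effect:               {TRUE_EFFECT:.2f}")
    print(f"average published early:   {statistics.fmean(early):.2f} "
          f"({len(early)} of 500 studies published)")
    print(f"average later replication: {statistics.fmean(later):.2f}")
```

Because a study this small can only reach significance if its estimate happens to land above roughly 0.55 standard deviations, every published early estimate is several times the true effect, while the large replications cluster around 0.10. In this toy world, nobody has to do anything dishonest for the effect to "decline".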

Now, having considered growth mindset, we can add a new type of decline effect, which brings to mind the “hype cycle” that’s often discussed in tech:

Initial massive exaggeration of the importance of an effect gives way to more realistic, much smaller effects (which might still be useful) after people start doing better studies.

This type of decline effect is a good thing. Sure, it’s too late to put the growth mindset hype genie back in the bottle now that it’s spread to every school in the land. And even if the better studies turn out positively, it wouldn’t retroactively justify the previous hype, or the daft, ultra-naïve overextension into solving international conflicts. But even after all these mistakes, this is an encouraging example of psychology research getting serious - and maybe even those who spread the initial hype getting serious too. It’s genuinely good to see.

After all, isn’t it nice when people show they can change?

Image credits: top image: Getty; slide image: TED.
